Princeton PLACES

A human-centered building performance evaluation system for Princeton University

Client

My Focus

user experience

web-based prototype

Team

Princeton PLACES project team @ZGF Architects

Consultants: the Decision Lab, Daylight, Nitsch, Branchpattern

Time

Jun - Aug 2023

OVERVIEW

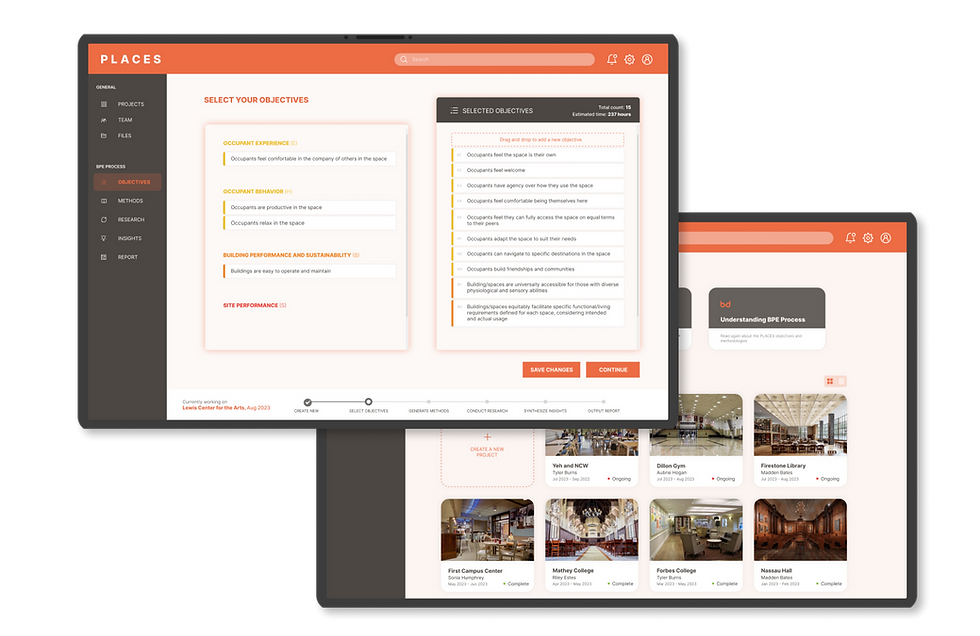

PLACES is a tool built for Princeton University to conduct building performance evaluations (BPE) on any of its campus buildings over the next few years.

Compared to traditional BPE processes, PLACES takes a more human-centered approach: it treats occupant experience and behavior as crucial parts of the framework and adds qualitative user research methods to quantitative data collection.

My role was to ideate, wireframe, and prototype the tool as a web-based platform that would guide the future research team through the process. The PLACES tool was expected to be handed over to Princeton and launched by the end of 2023.

OUTCOME

Start a New Evaluation Project

The PLACES tool experience starts with a dashboard of all BPE projects at Princeton, which can be toggled between list and card views.

Researchers can start a new project from here.

Select Evaluation Objectives

The PLACES tool comes with 4 categories of evaluation objectives:

- occupant experience (E)

- occupant behavior (H)

- building performance & sustainability (B)

- site performance (S)

From this pool, researchers can choose a subset of objectives to include in their new evaluation, based on factors such as building age and function.

Generate Research Methods

Given the customized objective list, the PLACES tool auto-generates a set of methodologies for researchers. Each methodology indicates the objectives it attempts to evaluate and the estimated implementation time.

Researchers have the freedom to edit the specifics within each method.
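As a rough illustration of the generation step described above, here is a minimal Python sketch. It assumes a catalog in which each methodology lists the objective codes it evaluates and an estimated implementation time. The catalog entries, the objective codes (`E1`, `H1`, ...), the hour values, and the `generate_methods` function are all hypothetical; they are not taken from the actual PLACES tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Method:
    name: str
    objectives: frozenset  # objective codes this method attempts to evaluate
    est_hours: float       # estimated implementation time (hypothetical unit)


# Hypothetical catalog: names, codes, and hours are made up for illustration.
CATALOG = [
    Method("Occupant survey", frozenset({"E1", "H1"}), 6.0),
    Method("Walkthrough checklist", frozenset({"B1", "S1"}), 3.0),
    Method("Occupant interviews", frozenset({"E1", "E2"}), 8.0),
]


def generate_methods(selected, catalog=CATALOG):
    """Return every catalog method that covers at least one selected objective.

    Each returned method lists only the selected objectives it covers, so the
    researcher can see why it was suggested.
    """
    selected = frozenset(selected)
    plan = []
    for method in catalog:
        covered = method.objectives & selected
        if covered:
            plan.append(Method(method.name, covered, method.est_hours))
    return plan


if __name__ == "__main__":
    for m in generate_methods({"E1", "S1"}):
        print(m.name, sorted(m.objectives), m.est_hours)
```

Returning new `Method` records rather than mutating the catalog leaves room for researchers to edit the specifics of each suggested method afterwards without affecting other projects.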

Synthesize Insights

Researchers reflect on the information they gather, then synthesize it into insights and observations that they enter on the PLACES platform.

Observations are paired with corresponding objectives. Researchers can also link evidence (such as survey responses, checklist results, and interview quotes) to each observation.
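One way to picture the pairing of observations, objectives, and evidence is the small Python data model below. It is only a sketch under assumed names: the `Evidence` and `Observation` classes, their fields, and the `observations_by_objective` helper are hypothetical and do not describe how the PLACES prototype stores its data.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    kind: str  # e.g. "survey response", "checklist result", "interview quote"
    text: str


@dataclass
class Observation:
    summary: str
    objectives: list                              # objective codes it is paired with
    evidence: list = field(default_factory=list)  # linked Evidence items


def observations_by_objective(observations):
    """Group observations under every objective code they are paired with."""
    grouped = {}
    for obs in observations:
        for code in obs.objectives:
            grouped.setdefault(code, []).append(obs)
    return grouped


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    obs = Observation(
        summary="Study rooms feel too warm in the afternoon",
        objectives=["E1", "B1"],
        evidence=[Evidence("interview quote", "It gets stuffy after lunch.")],
    )
    for code, items in observations_by_objective([obs]).items():
        print(code, [o.summary for o in items])
```

Because an observation can be paired with more than one objective, the same observation may appear under several codes in the grouped result.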

PROCESS

project timeline (planned)

Ideation and Research

In collaboration with our consultants, our team first "unearthed" an initial list of evaluation objectives.

To validate them, we went to the Princeton campus and conducted in-person engagement activities with students and faculty.

pop-up engagement activity

campus "love letter" activity

example site visit

Engagement activities helped us determine which aspects of the campus buildings matter to the Princeton community and which methodologies are appropriate for evaluating them.

on-campus survey flyer

research data clean-up

working Miro board

engagement week schedule

Observations and Analysis

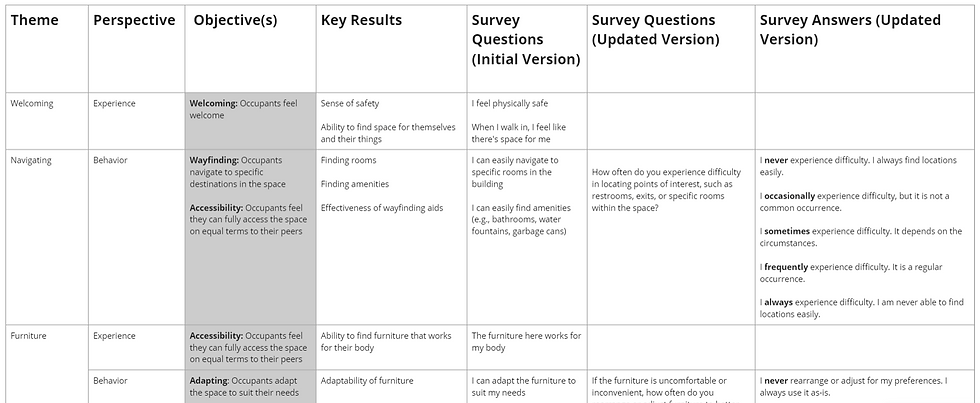

After rounds of consultant and client meetings, we finally assembled, categorized, and mapped the set of objectives as shown below.

Alongside this, we planned out possible methodologies and began pairing them with objectives.

a close-up snapshot of survey question list (WIP) generated together with Branchpattern

methodologies overview

Client's Pain Point

The Princeton committee's biggest pain point was the fear of being overwhelmed by a huge, complex tool and being left unclear about how to conduct the evaluation.

As the UX designer, I needed to address our client's concern:

I advocated for a web-based tool as the final deployment platform. I broke the process down into user-friendly steps that guide researchers through the evaluation and reassure them about what to do next.

Prototyping

breaking down the tool goals

UX wireframe

frames